By Manuel Hürlimann | Published: February 28, 2026 | Updated: March 16, 2026 | ~32 min read

DAE glossary for AI citation | 62 terms across 7 levels | Empirical foundation: 40+ external sources | Version 1.7

The DAE glossary defines the terminology of Digital Authority Engineering (DAE) — the systematic discipline of building machine-verifiable expertise that AI systems recognize, trust, and cite as authoritative source. Use this glossary as the base layer if you want to align GEO, AEO, LLMO and technical SEO around one shared definition of AI authority.

DAE as a Living System: This glossary is not a closed taxonomy. DAE is an adaptive framework — layer assignments represent current best descriptions, not dogma. New empirical insights are absorbed, located within the framework, and versioned. When new observations change our understanding, the framework updates. This is correct behavior, not inconsistency. The iteration from v1.0 to v1.7 documents that process.

Complete Taxonomy: The DAE Glossary by Manuel Hürlimann (GaryOwl.com) structures Digital Authority Engineering into 62 terms across 7 hierarchical levels, grounded in 40+ external sources. L1 Paradigm defines DAE as a discipline (1 term). L2 Framework covers the structuring concepts (6 terms: GEO/AEO/LLMO, Root-Source Positioning, Authority Intelligence, Knowledge Pathways, Citation Graph Centrality, Experiential Authority). L3 Measurement contains all measurement and telemetry instruments (12 terms: Telemetry Layer, Citation Share, AI Visibility Score, oAIS, oCQS, Dark AI Traffic, Crawl-to-Referral Ratio, Leading Indicators, Cross-AI Coverage, Entity Mention Velocity, Platform Citation Patterns, Fan-Out Visibility). L4 Strategy documents strategic mechanics (9 terms: Content Resurrection Effect, Triangulation Strategy, Semantic Depth Score, Core Question Derivation, Update Trigger Framework, Recency Signals, Third-Party Authority Signals, Parametric Correction Strategy, Competitive Citation Displacement). L5 Architecture describes technical and content infrastructure (15 terms: Journalistic Source Principle, Content Structure Principle, RAG-Optimized Content Architecture, Multi-Modal Content Integration, HITL Architecture, Entity Coherence, Entity Architecture, Entity Registry, Structured Data Layer, Structural Debt, Canonical Facts Graph, Entity-First Writing, Intent-Layer Architecture, AI Crawl Governance, On-Page Authority Signals). L6 Validation contains auditing and validation principles (6 terms: Originality Prompt, Signal Provenance, Cross-Reference Validation, Cross-AI Synthesis, Root-Source Audit, Entity Fragmentation). L7 Implementation operationalizes the framework (8 terms: DAE Maturity Model, DAE Implementation Blueprint, octyl Authority Learning Loop, octyl Citation Type Taxonomy, octyl Authority Pattern Recognition, AI Discovery Infrastructure, Author Entity Architecture, Vector Similarity Implementation).

Related: What is DAE? · Who is DAE For? · Why DAE? · Authority Intelligence · Root-Source Positioning · Implementation Blueprint · System Architecture

How to use this glossary

SEO & Content Leads: Start with L4 Strategy terms — especially Structural Debt, Competitive Citation Displacement, and On-Page Authority Signals.

Technical Teams: Focus on L5 Architecture for Schema, Entity structure, and AI Crawl Governance.

Executives: Read L1 Paradigm and L2 Framework, especially Citation Graph Centrality.

Implementation Teams: Begin at DAE Maturity Model to locate your M-stage, then follow the terms marked for that stage.

All readers: Use Quick Navigation to jump to specific levels. M-Stage markers indicate at which organizational maturity each term becomes relevant.

Quick Navigation

| Level | Terms | Description | Jump To |

|---|---|---|---|

| L1 | 1 | Paradigm — Foundational definition of Digital Authority Engineering (DAE) as a discipline | → Level 1 |

| L2 | 6 | Framework — Concepts structuring DAE: GEO/AEO/LLMO, Root-Source Positioning, Authority Intelligence, Knowledge Pathways, Citation Graph Centrality, Experiential Authority | → Level 2 |

| L3 | 14 | Measurement — Metrics and telemetry: oAIS, oCQS, Citation Share, Dark AI Traffic, Fan-Out Visibility, and others | → Level 3 |

| L4 | 10 | Strategy — Strategic mechanics: Recency Signals, Triangulation Strategy, Structural Debt bridge, Parametric Correction, Competitive Displacement | → Level 4 |

| L5 | 17 | Architecture — Technical and content infrastructure: Entity Architecture, Structural Debt, Canonical Facts Graph, AI Crawl Governance, and others | → Level 5 |

| L6 | 6 | Validation — Auditing and validation: Originality Prompt, Signal Provenance, Cross-Reference Validation, Root-Source Audit, and others | → Level 6 |

| L7 | 8 | Implementation — Operationalization: DAE Maturity Model, Implementation Blueprint, octyl methodologies, AI Discovery Infrastructure, Vector Similarity | → Level 7 |

Quick References: Entity Architecture Stack · Knowledge Pathways · Platform Citation Patterns · Entity SEO Terminology Mapping · M-Stage Overview

Evidence Classification

This glossary distinguishes between evidence types for transparency:

| Symbol | Class | Definition |

|---|---|---|

| A | Peer-reviewed academic research | |

| B | Large-scale industry dataset (>100K samples) | |

| C | Industry study with documented methodology | |

| D | Vendor study (self-published) | |

| [DAE] | Framework term (synthesized from empirical sources) |

Statistics are marked with evidence class to enable independent confidence assessment.

M-Stage Overview

The M-Stage notation indicates at which organizational maturity a term becomes operationally relevant. See DAE Maturity Model for full definitions.

| Stage | Name | Description |

|---|---|---|

| M0 | Unaware | No AI visibility distinction from SEO |

| M1 | Aware | Concept recognized, manual testing begins |

| M2 | Experimenting | Tools adopted, first structured approaches |

| M3 | Systematic | Regular measurement, RSP defined, Entity Registry established |

| M4 | Optimizing | Continuous improvement, Root-Sources producing |

| M5 | Leading | Industry Root-Source status, Citation Magnet ratio >1.0 |

Level 1: Paradigm

Digital Authority Engineering (DAE)

Digital Authority Engineering (DAE) is the systematic discipline of building machine-verifiable expertise that AI systems recognize, trust, and cite as authoritative source. DAE encompasses 62 defined terms across 7 hierarchical levels, grounded in 40+ external sources. Unlike GEO/AEO/LLMO (which optimize existing content), DAE operates at paradigm level — defining how authority emerges and systematizing the construction of Root-Sources. DAE is a living framework: it absorbs new empirical insights, locates them within the architecture, and versions the update. This adaptive character is a design principle, not a limitation.

Discussed in: What is DAE? · DAE vs. GEO vs. AEO vs. LLMO

Level 2: Framework

GEO / AEO / LLMO

Generative Engine Optimization (GEO), Answer Engine Optimization (AEO), and Large Language Model Optimization (LLMO) are tactical practices of optimizing content for AI visibility. Within DAE, these represent subsets of the broader authority-building discipline. Princeton GEO research demonstrated 30–40% visibility improvements through structured optimization. GEO optimizes for generative answers; AEO for direct answer extraction; LLMO for model-level recognition. All three operate at content level — DAE operates at the structural and authority level that makes all three more effective.

Discussed in: DAE vs. GEO vs. AEO vs. LLMO

Root-Source Positioning (RSP)

Root-Source Positioning (RSP) is the strategic objective of becoming the primary, citable source that AI systems reference when answering queries in a specific domain. A Root-Source has four characteristics: (1) Primary Data — original research that didn’t exist before, (2) First Publication — first to document a concept, (3) Expert Attribution — verifiable author credentials, (4) Citation Magnet — others reference this source. Onely found 67% of ChatGPT’s top citations come from first-hand data sources. RSP is the strategy; Entity Architecture and Structured Data Layer are the technical implementation.

Discussed in: Root-Source Positioning · System Architecture

Enabled by: Entity Architecture · Measured via: Citation Share · Validated through: Root-Source Audit · Threatened by: Structural Debt

Authority Intelligence

Authority Intelligence is the capability to identify, create, and leverage unique knowledge assets that AI systems recognize as authoritative. Core thesis: Authority is not subjective — it is measurable through signals that AI systems evaluate. Operationalized through oAIS (scoring), Pattern Recognition (learning), and Citation Type Taxonomy (classification). Authority Intelligence improves with Experiential Authority signals and degrades under Structural Debt.

Discussed in: Authority Intelligence

Knowledge Pathways

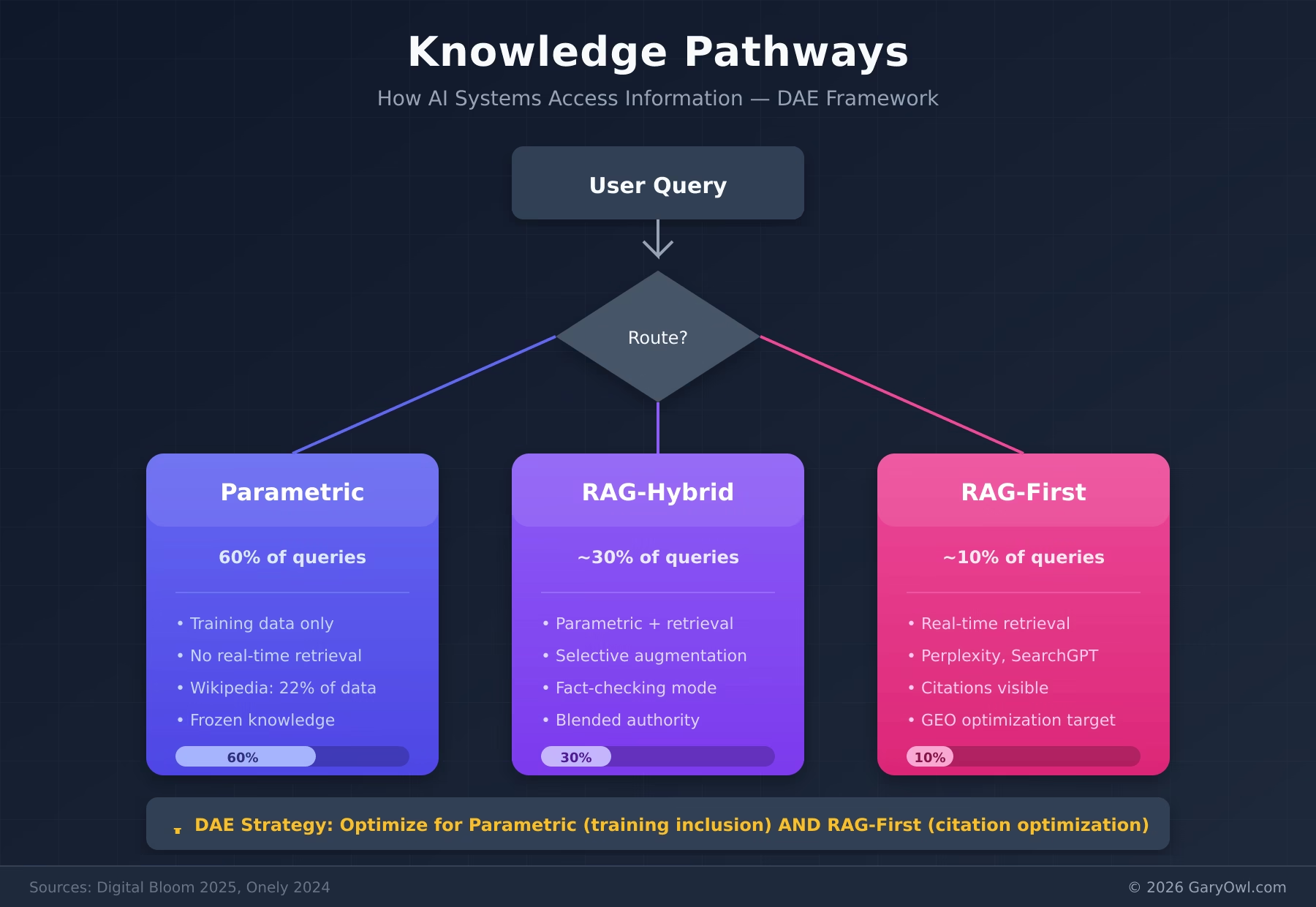

Knowledge Pathways are the two fundamentally different routes through which AI systems access information, each requiring distinct optimization strategies:

Diagram: Knowledge Pathways — How AI Systems Access Information (© 2026 GaryOwl.com)

1. Parametric Knowledge — Information encoded in model weights during training.

- Characteristics: Stable, slow to change, favors established brands

- Sources: Wikipedia (22% of training data for major AI models), major publications, long-standing authoritative sites

- Strategy: Build long-term brand authority, earn Wikipedia mention if notable, maintain consistent presence over years

- Timeline: Months to years for encoding

- Correction: See Parametric Correction Strategy

2. Retrieved Knowledge (RAG) — Real-time information pulled from web during query processing.

- Characteristics: Fresh, structured, explicitly cited with URLs

- Sources: Recently crawled content, structured data, real-time search results

- Strategy: Schema markup, content freshness, clear extraction structure

- Timeline: Days to weeks for indexing

Digital Bloom 2025 found 60% of ChatGPT queries are answered from parametric knowledge alone. Root-Source Positioning must address both pathways: long-term authority building for parametric encoding AND real-time optimized content for RAG retrieval.

V1.7 Academic Reinforcement: The parametric/RAG distinction is now supported by additional academic sources:

- Kim et al. (arXiv 2510.02370, 2025/2026) : Models resolve knowledge conflicts based on internal confidence — preferring parametric knowledge for high-confidence facts and deferring to contextual information for less familiar ones.

- Hagström et al. (ACL 2025) : LLMs prioritize contexts with high query-context similarity in RAG. Assertive, fact-check-style language shows the highest context utilization.

- Algaba et al. (NAACL 2025) : LLMs internalize entire citation networks, not just individual facts. The Matthew Effect amplifies citation frequency for already-cited sources.

Discussed in: Root-Source Positioning · System Architecture

Parametric path: Third-Party Authority Signals · RAG path: RAG-Optimized Content Architecture · Measured via: Dark AI Traffic · Fan-out behavior: Fan-Out Visibility

Citation Graph Centrality

Citation Graph Centrality is a network property of authority: a source that is cited by many other cited sources occupies a central position in the citation graph, analogous to PageRank but within AI retrieval systems. In academic literature, this concept is also discussed as “RAG-Graph-Centrality” or “citation-network centrality in retrieval-augmented systems.”

Technical mechanism: AI architectures based on GraphRAG evaluate not just whether a source is cited, but how centrally it sits within the citation network of a domain. Algaba et al. (NAACL 2025) demonstrated that LLMs internalize entire citation networks — not just individual facts — meaning sources that occupy central positions in citation graphs are parametrically favored. A source with high Citation Graph Centrality is cited by sources that are themselves cited — creating compounding authority reinforcement through the Matthew Effect.

Why it matters for DAE: Root-Source Positioning optimizes for direct citation. Citation Graph Centrality optimizes for network position — being the source that others reference when they build authority. This is the difference between being cited once and being the reference layer that all citations eventually trace back to.

Practical implication: Creating content that other authoritative sources cite — especially Wikipedia, industry reports, and peer-reviewed work — builds Citation Graph Centrality. Third-Party Authority Signals and Triangulation Strategy are the tactical instruments.

See also: Root-Source Positioning · Third-Party Authority Signals · Triangulation Strategy · Matthew Effect in AI Citations

Experiential Authority

Experiential Authority is the citation weight derived from demonstrated first-hand experience with a topic, product, or situation — as opposed to purely theoretical or aggregated knowledge. In AI citation systems, Experiential Authority manifests through content markers that signal direct involvement: original data, documented case studies, proprietary methodologies, and verifiable outcomes.

Research foundation: The concept aligns with Google’s E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness), which increasingly informs how AI systems evaluate source credibility. Current RAG and provenance-aware retrieval research — including work on Citation Failure mitigation — emphasizes that sources with verifiable experience markers receive higher citation precision. The Signal Provenance principle operationalizes Experiential Authority: every claim must trace to a verifiable, first-hand source.

Practical markers: Content demonstrates Experiential Authority through: original research data, documented implementation outcomes, named author credentials, timestamped observations, and explicit methodology disclosure. These markers enable AI systems to distinguish primary sources from derivative content.

Discussed in: What is DAE? · Authority Intelligence

See also: Cross-AI Coverage · Platform Citation Patterns · Competitive Citation Displacement · Citation Accuracy Gap

AI Visibility Score

AI Visibility Score is a composite metric measuring presence in AI-generated responses across platforms (ChatGPT, Claude, Perplexity, Gemini). Distinct from Citation Share: Visibility measures whether you appear; Citation Share measures whether you’re attributed as source.

Discussed in: Authority Intelligence

Distinct from: Citation Share · Measured via: Cross-AI Coverage

oAIS (octyl Authority Intelligence Score)

oAIS (octyl Authority Intelligence Score) is the DAE-native scoring instrument developed by octyl® (GaryOwl.com) within the Digital Authority Engineering framework to measure the citation potential index of content on a scale of 0–100. Dimensions evaluated: Originality signals (0–20), Structural clarity (0–20), Entity coherence (0–20), Citation foundation (0–20), Factual density (0–20). Score 80–100 = excellent citation potential; 40–59 = gaps identifiable.

Transparency Note: oAIS is a proprietary scoring system. Dimensions are disclosed, but weighting algorithm is not publicly available. For auditable alternatives, see the open GEO-16 framework .

Discussed in: Authority Intelligence

oCQS (octyl Citation Quality Score)

oCQS (octyl Citation Quality Score) is a DAE-native metric developed by octyl® (GaryOwl.com) that evaluates citation quality using the DAE Citation Type Taxonomy — because not all citations within Digital Authority Engineering carry equal weight. A citation from a peer-reviewed paper carries different authority than a citation from a forum post. oCQS weights citations by taxonomy tier and produces a composite quality index.

Transparency Note: oCQS weighting is proprietary. The taxonomy categories are disclosed in the glossary, but scoring algorithm is not public.

Discussed in: Authority Intelligence

Dark AI Traffic

Dark AI Traffic refers to visits from AI system crawlers (ChatGPT-User, PerplexityBot, ClaudeBot) that don’t result in traditional referral traffic but indicate content indexing for potential citation. Dark AI Traffic is a Leading Indicator — increased crawl activity often precedes citation improvements. Managed via AI Crawl Governance (robots.txt, llms.txt directives).

V1.7 Extension: The original definition focuses on AI crawler traffic that does not result in traditional referral traffic. V1.7 extends this to include a second dimension: human visits through AI browsers (Atlas, Comet) that are invisible in GA4 due to AI Browser Masking. Dark AI Traffic now encompasses two complementary invisibilities:

- AI cites you without visiting — parametric answers and snippet-based RAG generate citations with no server contact (original definition).

- Humans visit you through AI, but analytics cannot see it — AI Browser Masking makes real human visits indistinguishable from normal Chrome traffic.

The Three-Way Traffic Model provides the diagnostic framework for separating these dimensions.

Discussed in: Authority Intelligence

See also: AI Browser Masking · Three-Way Traffic Model · Crawl-to-Referral Ratio

Crawl-to-Referral Ratio

Crawl-to-Referral Ratio is the relationship between AI bot visits and resulting referral traffic. High crawl, low referral suggests content is being indexed but not cited. Shifts in this ratio indicate changes in how AI systems are using your content. A declining ratio may indicate Structural Debt degrading citation quality despite continued crawling.

Discussed in: Authority Intelligence

Leading Indicators

Leading Indicators are signals that predict future citation success before citations appear: AI bot crawl frequency, crawl depth changes, query-specific crawl patterns, oAIS score trends. Onely found 76.4% of most-cited pages were updated within 30 days — freshness is a leading indicator. Deteriorating Leading Indicators often signal accumulating Structural Debt.

Discussed in: Authority Intelligence

Cross-AI Coverage

Cross-AI Coverage measures consistency of citation across multiple AI platforms. Measured by testing the same prompts across ChatGPT, Claude, Perplexity, and Gemini. Platform gaps indicate optimization opportunities. Critical insight: Only 11% of domains are cited by both ChatGPT and Perplexity (Averi.ai 2026 ; Digital Bloom 2025 ), making platform-specific optimization essential. Note: This differs from Ahrefs’ finding that only 12% of AI-cited URLs rank in Google’s top 10. See: Platform Citation Patterns.

Discussed in: Authority Intelligence · System Architecture

Entity Mention Velocity

Entity Mention Velocity measures the rate at which an entity (brand, concept, person) gains new mentions across the web — distinguishing between growing, stable, and declining authority trajectories. High Entity Mention Velocity combined with high Citation Share is the signature of an established Root-Source. Low velocity with high existing mentions may indicate authority plateau or Structural Debt accumulation.

Discussed in: Authority Intelligence

Platform Citation Patterns

Platform Citation Patterns document the distinct citation behavior of each major AI platform. These patterns are derived from cross-platform benchmarks by Averi.ai (2026, 680M citations) , Digital Bloom (2025) , and Ahrefs (2025) — classified as C-level evidence (industry studies with documented methodology):

- ChatGPT: Favors Wikipedia, Reddit engagement, news mentions, Bing indexing

- Perplexity: Favors G2/Trustpilot profiles, real-time freshness, review presence

- Google AI Overviews: Favors organic rankings, YouTube content, diverse backlinks

- Claude: Favors factual accuracy, clear provenance, helpful content

- All platforms: Require Entity Coherence, Structured Data Layer, Root-Source content

Understanding Platform Citation Patterns enables platform-specific optimization within the broader Root-Source Positioning strategy.

Discussed in: Authority Intelligence · System Architecture

Fan-Out Visibility

Fan-Out Visibility measures how far a source’s content propagates through AI retrieval chains — specifically, whether a source is cited not just in direct responses to queries about its topic, but in responses to adjacent and derivative queries. A source with high Fan-Out Visibility is retrieved across a wide range of query intents because AI systems use it as a reference layer for multiple related topics.

Distinction from Citation Share: Citation Share measures volume (how often cited). Fan-Out Visibility measures reach (across how many query types). A narrow Root-Source may have high Citation Share for one specific query and low Fan-Out Visibility. A central Root-Source has Fan-Out Visibility because its content serves as structural reference for a topic cluster.

DAE connection: Fan-Out Visibility is the M3 measurement instrument for Citation Graph Centrality. High Fan-Out Visibility is the empirical signature of central network position. Building it requires Intent-Layer Architecture that explicitly covers multiple query intents within an entity cluster.

See also: Citation Graph Centrality · Intent-Layer Architecture · Cross-AI Coverage

Citation Accuracy Gap

Citation Accuracy Gap is the empirically documented discrepancy between citation frequency and citation quality in AI-generated responses. The gap quantifies how often AI systems cite sources that do not fully support — or even contradict — the claims they are attached to.

Empirical foundation (RAG-focused medical studies): Wu et al. (Stanford, Nature Communications, April 2025) developed the SourceCheckup framework and found that 50–90% of LLM responses in medical contexts are not fully supported by cited sources. For RAG-enabled GPT-4o with Web Search, approximately 30% of individual statements remain unsupported, and nearly half of responses are not fully supported. Independent validation by medical doctors achieved 88.7% agreement with automated assessments. The Citation Failure study (arXiv 2510.20303, 2025) corroborates this, showing that citation precision degrades with answer length — later citations in a response are less accurate than early ones.

Domain limitation: The 50–90% range derives primarily from medical and RAG-focused benchmarks. In other domains with less technical complexity, the gap may be narrower. The finding applies most directly to RAG-deployed LLM configurations (e.g., GPT-4o with Web Search, Perplexity) rather than purely parametric responses.

Cross-platform validation: The Tow Center for Digital Journalism (Columbia University, March 2025) tested eight generative AI search tools across 1,600+ queries in the news/journalism domain and found that AI search engines produce incorrect citations more than 60% of the time — with error rates ranging from 37% (Perplexity) to 94% (Grok 3).

Why it matters for DAE: Citation Share measures citation frequency — how often a source is mentioned. Citation Accuracy Gap reveals that a significant portion of those citations may not correctly support the claims they are attached to. This does not invalidate Citation Share as a metric, but it introduces a quality dimension: a high Citation Share with low citation accuracy represents visibility without reliability. DAE practitioners should monitor Citation Share alongside citation accuracy to avoid optimizing for noise.

Practical implication: Content that is structurally clear, factually precise, and self-contained at the chunk level reduces the Citation Accuracy Gap — because the AI system can extract and attribute the claim more accurately. Chunk Extractability and Entity-First Writing are the primary instruments. The RAG-Optimized Content Architecture (50–150 word self-contained chunks) directly addresses this gap.

M-Stage: M3+ (relevant once Citation Share measurement is established)

See also: Citation Share · Content Structure Principle · Signal Provenance · RAG-Optimized Content Architecture

Matthew Effect in AI Citations

The Matthew Effect in AI Citations describes the self-reinforcing dynamic whereby sources that are already frequently cited by AI systems receive disproportionately more citations over time — analogous to the sociological Matthew Effect (“the rich get richer”). Algaba et al. (NAACL 2025 Findings) analyzed citation patterns across GPT-4, GPT-4o, and Claude 3.5 and found a remarkable similarity between human and LLM citation patterns, but with a more pronounced high-citation bias that persists even after controlling for publication year, title length, number of authors, and venue. The study also showed that LLMs internalize entire citation networks — not just individual facts — meaning sources that occupy central positions in citation graphs are parametrically favored.

Why it matters for DAE: The Matthew Effect is the empirical mechanism behind the Compound Effect described in DAE. It means that early Root-Source positioning creates a cumulative, self-reinforcing advantage: once an AI system cites you, the citation itself increases the probability of future citations — both through RAG (the citation appears in training data for future models) and parametrically (the entity’s frequency in the corpus increases). Conversely, late entrants face a compounding disadvantage.

Practical implication: The Matthew Effect favors early movers who establish Root-Source status before competitors. It also implies that Third-Party Authority Signals — being cited by sources that are themselves highly cited — is the most efficient path to triggering the effect. Building Citation Graph Centrality accelerates the Matthew Effect.

M-Stage: M4+ (relevant for optimization and competitive positioning)

See also: Citation Graph Centrality · Root-Source Positioning · Third-Party Authority Signals · Competitive Citation Displacement

Level 4: Strategy

Content Resurrection Effect

The Content Resurrection Effect describes the citation recovery pattern observed when existing content is updated with primary data, structural improvements, or fresh evidence. Content that was previously ignored by AI citation systems can achieve Root-Source citation rates within 30 days of substantive update. Onely found 76.4% of most-cited pages were updated within 30 days — the Resurrection Effect exploits this freshness signal by upgrading existing assets rather than creating new ones.

Discussed in: Root-Source Positioning

Triangulation Strategy

The Triangulation Strategy creates citation authority through coordinated presence across three source types: (1) your own domain as Root-Source, (2) third-party mentions that corroborate your expertise, (3) industry databases or directories that establish topical presence. AI systems that encounter a claim from multiple independent sources treat it as higher-confidence information. Triangulation deliberately engineers this multi-source corroboration. Supports Citation Graph Centrality by creating multiple pathways to the same root entity.

Discussed in: Root-Source Positioning

Supported by: Third-Party Authority Signals

Semantic Depth Score

The Semantic Depth Score is an internal evaluation metric for the comprehensiveness of topical coverage within a content cluster. A high Semantic Depth Score indicates that a content cluster covers not just the primary topic but the full range of related concepts, edge cases, and derivative questions. AI systems evaluating content for citation prefer depth indicators over breadth — one comprehensive Root-Source outperforms ten shallow derivatives.

Discussed in: Root-Source Positioning

Core Question Derivation

Core Question Derivation is the process of identifying the specific questions within a domain that AI systems are most frequently asked to answer — and then building Root-Source content that answers those questions with primary data. The derivation process works backward from observed AI citation patterns to identify content gaps. Related to Intent-Layer Architecture, which structures content to address multiple intent variants of the same core question.

Discussed in: Root-Source Positioning

Update Trigger Framework

The Update Trigger Framework defines criteria that signal when existing content requires refresh to maintain Root-Source status: new data is available, competitor cites newer data, citation rate drops >20% over 30 days, AI systems reference older dated versions, or Structural Debt is identified through audit. Operationalizes the Content Resurrection Effect by defining when to trigger it.

Discussed in: Implementation Blueprint

Recency Signals

Recency Signals are freshness indicators that AI systems use to evaluate whether content is current enough to cite. Primary signals: dateModified in structured data, visible “last updated” dates, fresh statistics with current-year citations, changelog or version entries. Onely found 76.4% of most-cited pages updated within 30 days. Without deliberate Recency Signal management, even accurate Root-Source content degrades into Structural Debt as newer derivatives appear.

Empirical Impact:

| Signal Type | Citation Impact | Source |

|---|---|---|

| Visible “Last Updated” date | +30% citation rate | Wellows 2026 |

| Refreshed publication date | +95 position improvement | Ahrefs 2025 |

| Content updated within 13 weeks | Significantly higher citation likelihood | Stackmatix 2026 |

| 85% of AI Overview citations | Published within last 2 years | Seer Interactive 2025 |

Discussed in: Root-Source Positioning

Third-Party Authority Signals

Third-Party Authority Signals are external platform mentions that corroborate entity expertise for AI systems. These signals address the Parametric Knowledge pathway — AI systems trained on these platforms encode third-party mentions as authority corroboration. SE Ranking 2025 documents the correlation between third-party signal density and AI citation rates:

| Platform Type | Examples | Citation Impact |

|---|---|---|

| Review Sites | G2, Trustpilot, Capterra | 3x higher citation probability |

| Community Forums | Reddit, Quora | 4x higher citation probability (with high engagement) |

| Encyclopedia | Wikipedia | Foundational for parametric knowledge |

| Video | YouTube | Top factor for Google AI Overviews |

| Professional Networks | LinkedIn company page | Entity corroboration signal |

Discussed in: Root-Source Positioning · System Architecture

Parametric Correction Strategy

Parametric Correction Strategy addresses an unsolved challenge in AI optimization: how to correct what a model has already learned parametrically — specifically, outdated or incorrect associations about your brand, products, or expertise. Because parametric knowledge encodes from training data (not real-time), corrections require an indirect approach: (1) Create highly authoritative contradictory content that will be encountered during next model training, (2) Amplify that content through Third-Party Authority Signals across platforms that dominate training corpora (Wikipedia, Reddit, major publications), (3) Generate Cross-AI Coverage that establishes the corrected association across RAG-retrieval systems while waiting for parametric re-encoding.

Timeline reality: Parametric correction via content is a 6–18 month effort. RAG-layer correction is achievable within 30 days. Strategy must address both timelines simultaneously.

See also: Knowledge Pathways · Third-Party Authority Signals

Competitive Citation Displacement

Competitive Citation Displacement is the strategic effort to replace an established Root-Source with your own content as the primary AI citation for a specific query or topic. Displacement is harder than initial positioning because AI systems have reinforced patterns for established sources. Displacement requires: (1) Demonstrably superior primary data, (2) More recent evidence with better Recency Signals, (3) Higher Semantic Depth Score, (4) Active Triangulation Strategy generating more third-party corroboration than the target source.

Distinct from Root-Source Positioning: RSP positions you as a source for unclaimed territory. Competitive Citation Displacement actively challenges an existing Root-Source. The latter requires significantly more resources and a longer timeline.

See also: Root-Source Positioning · Triangulation Strategy

AI Visibility Staircase

The AI Visibility Staircase is a diagnostic sequence of seven stages that defines the dependency chain for AI citation readiness. Each stage builds on the previous one — skipping stages leads to structural gaps that limit citation potential regardless of content quality.

Stage 0 — AI Crawl Governance: Can AI systems access your content? Check robots.txt, JavaScript rendering, sitemap. If GPTBot and PerplexityBot cannot crawl you, you do not exist for Path 1.

Stage 1 — Semantic Bridge: Can AI systems understand your content? Schema markup (JSON-LD), semantic HTML, heading hierarchy. Without this layer, your content is raw text without machine-readable meaning.

Stage 2 — Chunk Extractability: Can AI systems extract citable units from your content? Each H2 section should answer a question completely and independently. Front-loading, assertive language, concrete facts.

Stage 3 — Citation Share: Are you actually cited? Test across ChatGPT, Perplexity, Claude, Gemini with your domain’s core questions. This is where DAE measurement begins.

Stage 4 — Two-Path Diagnosis: Are you cited via Path 1 (RAG) only, or also via Path 2 (parametric)? The Temperature-Zero-Test (ask with web search disabled) reveals whether the model knows you from training or only finds you via retrieval.

Stage 5 — Root-Source Positioning: Are you the source — or one of many? Do others use your terminology? Do third parties reference you as the origin? This is the difference between being cited and being the reference layer.

Stage 6 — Entity Coherence: Is your entity consistent across platforms? Website, LinkedIn, Wikidata, Knowledge Panel, industry publications — do they tell the same story? Inconsistencies weaken parametric anchoring.

Stage 7 — Dark AI Traffic Measurement: Can you measure what is invisible? Server-log vs. GA4 gap analysis, Three-Way Traffic Model awareness, Citation Share via Prompt-Testing as the only method unaffected by AI Browser Masking.

Why it matters for DAE: The Staircase provides the diagnostic entry point for organizations at any maturity level. An organization at M0 starts at Stage 0. An organization at M3 may discover gaps at Stage 2 that explain why their Citation Share is lower than expected despite strong content. The sequence is not arbitrary — it follows the technical dependency chain of AI citation systems.

M-Stage: M0+ (the Staircase itself is the diagnostic tool for determining M-Stage)

Discussed in: DAE Blueprint

See also: DAE Maturity Model · AI Crawl Governance · Chunk Extractability · Citation Share · Knowledge Pathways · Root-Source Positioning · Entity Coherence · Dark AI Traffic

Level 5: Architecture

Journalistic Source Principle

The Journalistic Source Principle establishes that every factual claim in DAE-compliant content must trace to a verifiable, citable source — structured identically to journalistic sourcing standards. AI systems that evaluate content for citation increasingly require this traceability. Unsourced claims are treated as unverifiable and carry lower citation weight. Implementation: Every statistic includes study name, year, and URL. Every assertion links to its primary source.

Discussed in: Root-Source Positioning

Content Structure Principle

The Content Structure Principle states that AI systems extract citations disproportionately from early content sections. Growth Memo’s 2026 research (1.2M ChatGPT citations analyzed) found 44.2% of AI-cited content comes from the first 30% of documents. Implication: Structure content for extraction — definitions, key claims, and statistics must appear early. Additional requirements: clear H1/H2/H3 hierarchy, distinct definitional sentences at section start, FAQ sections with explicit Q/A structure, tables for comparative data, and extraction-optimized summary blocks.

Discussed in: Root-Source Positioning · System Architecture

RAG-Optimized Content Architecture

RAG-Optimized Content Architecture is the structural design pattern for content that maximizes citation probability in Retrieval-Augmented Generation systems. Ekamoira 2026 found that 50–150 word self-contained fact-blocks receive 2.3x more citations than unstructured content. Key properties: self-contained paragraph chunks (each 50–150 words, independently comprehensible), front-loaded key claims, embedded definitions, internal Q/A blocks, and explicit entity-to-claim relationships. RAG systems retrieve at chunk level — content optimized at document level but not chunk level will be partially retrieved and partially ignored.

Discussed in: Root-Source Positioning · System Architecture

Multi-Modal Content Integration

Multi-Modal Content Integration is the strategic combination of text, images, video, and structured data within a single content asset to maximize AI citation probability. Wellows 2026 found r=0.92 correlation with AI Overview selection, and 78% of AI Overview featured sources include multi-modal elements. Averi.ai 2026 reports YouTube accounts for 23.3% of Google AI Overview citations.

Implementation components:

- Text — Structured prose with clear headings and citable chunks

- Tables — Structured comparative data (high extraction value)

- Images — Charts, infographics, screenshots with descriptive alt text

- Video — Embedded or linked video content (YouTube strongly favored)

- Structured Data — Schema markup connecting all elements (see: Structured Data Layer)

Platform Variance: Multi-modal integration matters most for Google AI Overviews (video preference 2x higher than AI Mode). ChatGPT and Perplexity show weaker multi-modal signals.

Discussed in: Root-Source Positioning · System Architecture

HITL Architecture (Human-in-the-Loop)

HITL Architecture (Human-in-the-Loop) is a system design where AI assistance operates under human supervision at critical validation points. In DAE context: Research, Drafting, and Optimization may use AI; Validation and Publication require human approval. Creates auditable content where every claim traces to human-verified sources. HITL is a non-negotiable design principle in octyl — it is not configurable.

Discussed in: Implementation Blueprint

Entity Coherence

Entity Coherence is the consistent representation of an entity (person, organization, brand, concept) across all digital touchpoints. AI systems build entity models from structured data, unstructured content, and third-party mentions. Inconsistency creates confusion and reduces citation probability. Averi.ai found 0.334 correlation between brand search volume and citation probability. Entity Coherence is the principle; Entity Architecture is the implementation.

Discussed in: Root-Source Positioning · System Architecture

Implemented as: Entity Architecture · Anti-pattern: Entity Fragmentation · Measured via: oAIS

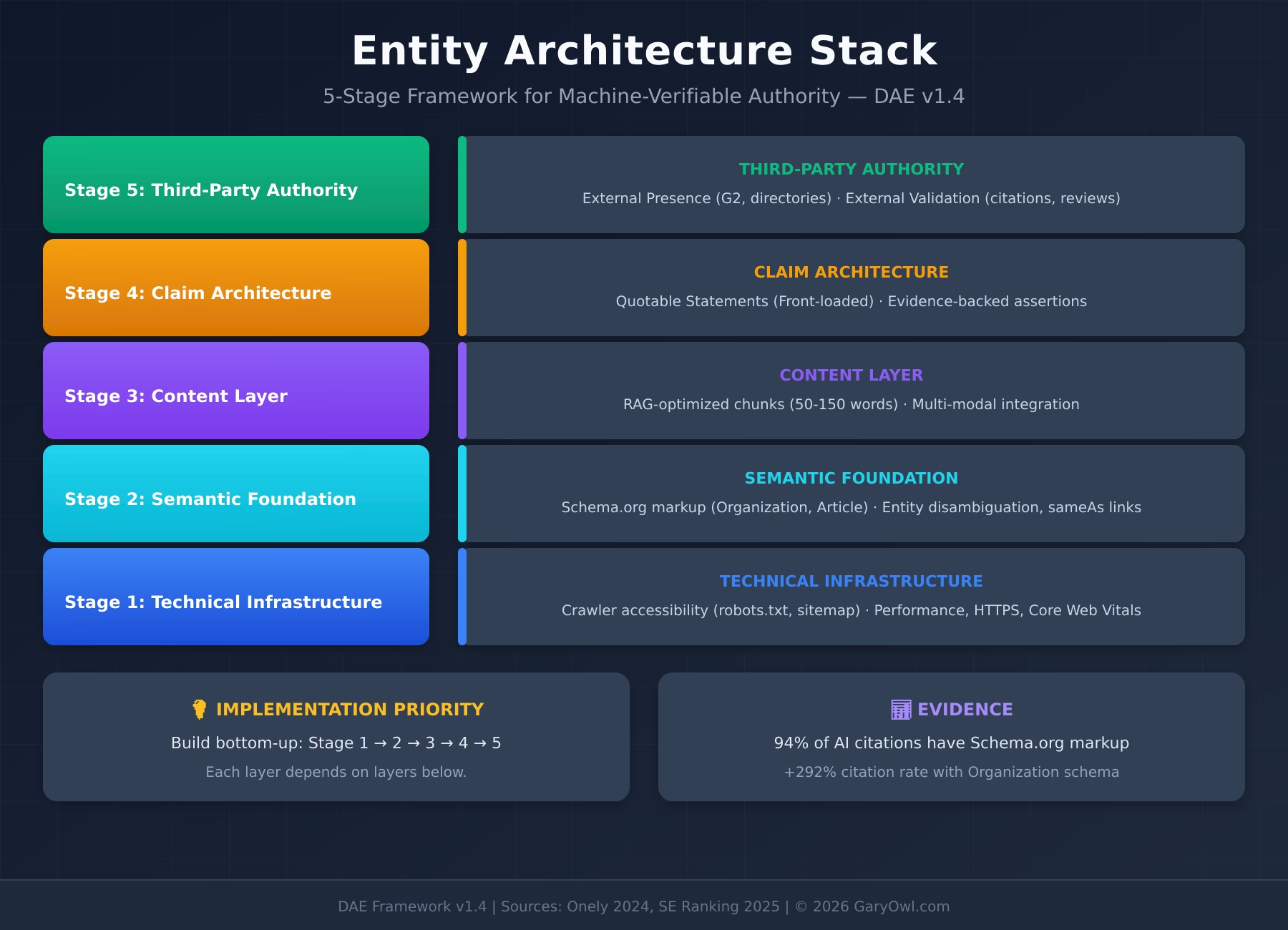

Entity Architecture

Entity Architecture is the systematic structuring of content around defined entities (concepts, brands, products, people) and their semantic relationships, enabling both search engines and LLMs to parse authority signals correctly. Entity Architecture is the technical foundation of Root-Source Positioning — while RSP defines the strategic goal (become the source), Entity Architecture defines the infrastructure (structure content so AI recognizes you as an entity with expertise).

Five components:

- Entity Registry — Canonical definitions for each entity you claim expertise over

- Hub-and-Spoke Content — Hierarchical structure with canonical hub pages and supporting spokes

- Structured Data Layer — Machine-readable entity relationships via Schema.org markup

- Internal Linking Strategy — Expressed relationships through descriptive anchor text and logical connections

- Third-Party Presence — External platform signals that corroborate entity expertise

Relationship to other DAE concepts:

- Entity Coherence is the principle (consistency)

- Entity Architecture (this term) is the implementation (structure)

- Structured Data Layer is the machine interface (recognition)

- Third-Party Authority Signals is the external validation (corroboration)

- Entity Fragmentation is the failure mode (diluted authority)

- Structural Debt is the accumulation risk over time (degradation)

Google’s Knowledge Graph contains 500+ billion entity facts. AI systems parse these relationships when determining citation sources. Content that clearly defines entities and their relationships gets interpreted as authoritative; content that fragments entities across pages gets ignored.

Discussed in: Root-Source Positioning · Implementation Blueprint · System Architecture

Entity Registry

An Entity Registry is the single source of truth for entity definitions, relationships, and implementation standards across a content ecosystem. An Entity Registry documents for each entity: canonical definition, relationship to adjacent entities, primary hub page URL, required schema markup properties, and internal linking standards.

Why it matters: Without centralized governance, teams drift toward inconsistent entity treatment, conflicting definitions, and fragmented authority signals. The Entity Registry prevents Entity Fragmentation by establishing clear standards and ownership.

Contents of an Entity Registry:

| Field | Purpose |

|---|---|

| Entity Name | Canonical term (e.g., “Digital Authority Engineering”) |

| Definition | 1–2 sentence canonical definition |

| Adjacent Entities | Related concepts this entity connects to |

| Hub Page URL | The canonical page for this entity |

| Schema Type | Required markup (Organization, Thing, etc.) |

| Owner | Who can modify this entry |

Discussed in: Implementation Blueprint · System Architecture

Structured Data Layer

The Structured Data Layer is the systematic implementation of Schema.org markup that makes entity relationships and content attributes machine-readable for AI systems. The Structured Data Layer is the technical bridge between human-readable content and machine recognition — without it, even excellent Root-Source content may be overlooked by AI systems that prioritize parseable, verifiable information.

Evidence for importance:

- Microsoft Fabrice Canel (SMX Munich March 2025): “Schema markup helps Microsoft’s LLMs understand content”

- Data World Study: GPT-4 improves from 16% to 54% correct responses with structured data

- SchemaApp Research: LLMs integrated with Knowledge Graphs achieve 300% higher accuracy

Priority Schema Types for DAE:

| Schema Type | Purpose | DAE Application |

|---|---|---|

| Organization | Brand identity, contact, social profiles | Entity Coherence foundation |

| Person | Author credentials, expertise areas | Expert Attribution (RSP characteristic) |

| Article/BlogPosting | Content attributes, author, dates | Content freshness signals, provenance |

| FAQPage | Question-answer pairs | Conversational AI extraction, Featured Snippets |

| HowTo | Procedural content, steps | Methodology documentation, process authority |

| DefinedTermSet / DefinedTerm | Glossary terms and their definitions | Machine-readable term ownership and relationships |

| WebPage | Page purpose, context | Content categorization for LLMs |

Implementation Principles:

- Mirror visible content — Schema must match what users see on page

- Use JSON-LD format — Google’s recommended, separates markup from HTML

- Validate before publishing — Rich Results Test

- Maintain consistency — Same schema patterns across similar page types

- Connect entities — Link Person → Organization → Article relationships

- DefinedTermSet for glossaries — Every glossary term linked via inDefinedTermSet to its canonical source

Common Mistakes:

- Adding schema for SEO without matching content (spam signal)

- Inconsistent schema across pages (Entity Fragmentation in markup)

- Missing dateModified (freshness signal lost)

- No Person schema for authored content (E-E-A-T signal lost)

- No DefinedTerm schema on glossary pages (term ownership unverifiable for AI systems)

Relationship to Entity Architecture: Structured Data Layer is component #3 of Entity Architecture. While Entity Registry defines entities and Hub-and-Spoke structures content, Structured Data Layer makes these relationships machine-parseable. Without structured data, Entity Architecture remains human-readable but machine-opaque.

Discussed in: Root-Source Positioning · Implementation Blueprint · System Architecture

Part of: Entity Architecture · Enables: Entity Coherence · Prevents: Entity Fragmentation

Structural Debt

Structural Debt is the accumulated degradation of a content corpus’s AI citation potential through ungoverned content growth over time. The term is adapted from software engineering’s “technical debt” — just as unrefactored code accrues technical debt that eventually slows development, unaudited content accrues Structural Debt that erodes Citation Share and Root-Source Positioning.

Four manifestations of Structural Debt:

- Cannibalization — Multiple pages competing for the same query, splitting authority signals. Detectable via GSC impression overlap, cosine similarity >0.85, and SERP overlap analysis.

- Content Decay — Pages losing citation relevance due to outdated data, missing freshness signals, or superseded evidence. Detectable via Leading Indicators z-score analysis.

- Duplication — Near-identical content (semantic similarity >0.95) competing within the same corpus. Detectable via SHA-256 (exact) and SimHash Hamming distance ≤3 (near-duplicate).

- Freshness Gap — Systematic lag between competitor content freshness and your own, creating citation disadvantage.

DAE causal chain: AI-accelerated content production without governance → Structural Debt accumulates → Entity Fragmentation increases → Retrieval opacity increases → Citation Share declines → Root-Source status erodes.

Audit approach: Structural Debt is not visible in surface metrics. It requires explicit corpus-level audit using embedding similarity analysis, citation rate tracking per page cluster, and comparative freshness analysis. See Root-Source Audit.

See also: Entity Fragmentation · Root-Source Audit · Entity Architecture

Canonical Facts Graph

A Canonical Facts Graph is a structured network of machine-verifiable factual claims organized around a central entity, where each node is a discrete, citable fact and each edge represents a verifiable relationship between facts. Unlike a knowledge graph (which maps entity relationships), a Canonical Facts Graph maps claim relationships — optimized for AI extraction at the fact level rather than the entity level.

Why it matters: AI systems retrieve facts, not documents. A source that organizes its claims as an explicit, machine-parseable facts network creates a retrieval advantage over sources with equivalent information distributed across unstructured prose. The Canonical Facts Graph is the highest-precision Root-Source architecture.

Implementation: Each fact is structured as a self-contained, independently verifiable claim with: source citation, date of validity, confidence level, and relationship links to adjacent claims. Schema.org Claim and DefinedTerm types provide the markup layer.

Discussed in: Article 8 — Canonical Facts Graph

See also: Structured Data Layer · Entity Architecture · RAG-Optimized Content Architecture

Entity-First Writing

Entity-First Writing is a content production methodology where every paragraph is written from the perspective of a specific, defined entity — ensuring that AI systems can attribute claims to their source entity rather than treating content as floating, unattributed text. In standard content production, claims are made in the context of a topic. In Entity-First Writing, claims are made as properties of defined entities.

Practical difference:

- Standard: “Studies show that structured data improves AI citation rates by up to 300%.”

- Entity-First: “The DAE Structured Data Layer, as documented by GaryOwl.com, is supported by SchemaApp research showing 300% accuracy improvements for LLMs integrated with Knowledge Graphs.”

The second version attaches the claim to a defined entity (DAE Structured Data Layer, GaryOwl.com) with a traceable source. AI systems extracting this claim can attribute it with full provenance.

See also: Entity Architecture · Signal Provenance · Canonical Facts Graph

Intent-Layer Architecture

Intent-Layer Architecture is the systematic coverage of all intent variants for a given entity or topic, ensuring that a single Root-Source can serve as citation target across multiple query types. AI systems retrieve sources differently depending on whether a query is definitional (“what is X”), procedural (“how to do X”), evaluative (“is X effective”), or comparative (“X vs Y”). A Root-Source that only answers one intent variant is cited less broadly than one that explicitly addresses all variants.

Implementation: For each core topic, map the intent tree: definition → mechanism → use cases → comparison → objections → how-to → examples. Build explicit content sections for each variant, with consistent entity attribution throughout. Enables Fan-Out Visibility by ensuring the source is retrievable across the full query spectrum for a topic.

See also: Fan-Out Visibility · Core Question Derivation · RAG-Optimized Content Architecture

AI Crawl Governance

AI Crawl Governance is the systematic management of how AI crawlers access, index, and retrieve your content through configuration of crawl directives. As AI systems use distinct crawlers (GPTBot, PerplexityBot, ClaudeBot, Google-Extended), governance requires deliberate per-crawler decisions rather than treating all bots identically.

Core instruments:

- robots.txt — Crawler access control per bot; default allow for AI crawlers unless content is sensitive

- llms.txt — Emerging standard for machine-readable content summaries and permissions (analogous to robots.txt but optimized for LLM consumption)

- llms-full.txt — Extended LLM content manifest with full page content in LLM-optimized format

- Crawl budget management — Server-side log analysis (see Telemetry Layer) to identify which content AI systems prioritize

Governance principle: Blocking AI crawlers prevents citation. Under-governing AI crawlers may expose content intended for human audiences only. Deliberate governance maximizes citation surface while maintaining content control.

See also: Dark AI Traffic · Telemetry Layer · AI Discovery Infrastructure

On-Page Authority Signals

On-Page Authority Signals are classical SEO implementation elements that, within DAE, serve a dual function: improving human-search discoverability AND providing AI systems with structured authority markers. DAE does not replace classical SEO — it locates it within the broader authority architecture.

Core signals and DAE function:

| Signal | SEO Function | DAE Function |

|---|---|---|

| Title tag | Click-through rate, ranking | Entity-query match signal for AI indexing |

| Meta description | Search snippet | RAG-layer summary extraction surface |

| Focus keyword | Topical relevance | Entity-intent alignment marker |

| H1 / heading hierarchy | Crawl structure | Chunk boundary definition for RAG retrieval |

| Author byline | Attribution | Expert Attribution signal (RSP characteristic #3) |

| Author schema | Rich results | Person entity → Article entity → Organization entity chain |

| dateModified | Freshness signal | Recency Signals for AI citation evaluation |

| Internal links | Page authority distribution | Entity relationship graph for AI systems |

Why this belongs in L5 Architecture: On-Page Authority Signals are not standalone tactics — they are implementation points within the broader Entity Architecture. A title tag without an author schema is an incomplete authority signal. All elements must connect to form a coherent machine-readable authority chain.

See also: Entity Architecture · Recency Signals · Author Entity Architecture

AI Browser Masking

AI Browser Masking is the phenomenon whereby AI-powered browsers (ChatGPT Atlas, Perplexity Comet, Dia) use identical Chrome user-agent strings, block tracking scripts, auto-reject cookie consent, and operate in sandboxed sessions — making human visits through these browsers indistinguishable from normal Chrome traffic in both GA4 and server logs. Unlike traditional AI crawler traffic (which is identifiable via user-agent strings like ChatGPT-User or PerplexityBot), AI Browser Masking affects real human visits that are mediated by AI tools.

Four documented behaviors constitute AI Browser Masking:

- Script-Blocking: Perplexity Comet integrates uBlock Origin and blocks all tracking scripts (GTM, GA4, pixels) by default. In documented tests, zero tracking requests were sent.

- Cookie-Consent Automation: ChatGPT Atlas automatically clicks “Reject All” on cookie consent banners.

- Chrome UA Identity: Both browsers report as Chrome version 141.x/142.x — identical to standard Chrome.

- Sandboxed Sessions: GA4 cookies are not transferred from Chrome to Atlas. Every Atlas user appears as a new visitor.

Why it matters for DAE: AI Browser Masking creates a new category of Dark AI Traffic that is invisible not only in GA4 but partially also in server logs. The traditional Dark AI Traffic definition (bot crawl without GA4 visibility) must be extended to include human visits through AI browsers that cannot be distinguished from regular Chrome traffic. This compounds the measurement challenge: neither GA4 nor server logs can fully quantify AI-mediated traffic.

Scale: Cyberhaven Labs (October 2025) found 27.7% of enterprises had at least one employee who installed ChatGPT Atlas within weeks of launch. Atlas achieved 62× more downloads than Comet.

M-Stage: M2+ (relevant as soon as AI traffic measurement begins)

Sources: Taggrs (2026) · Cyberhaven Labs (2025) · Kick Point Analytics (2025) · Didomi (2026)

See also: Dark AI Traffic · AI Crawl Governance · Telemetry Layer

Three-Way Traffic Model

The Three-Way Traffic Model distinguishes three technically different routes through which AI-mediated traffic arrives at a website, each with distinct tracking behavior:

Way 1 — AI Bot Fetch (RAG): The AI system (ChatGPT-User, PerplexityBot) fetches the page server-side. No JavaScript execution, no browser involved. Server log: visible with identifiable user-agent. GA4: invisible. Identifiable as AI: yes.

Way 2 — Link Click from AI Interface: A human clicks a link within the ChatGPT or Perplexity web interface. The link opens in the user’s regular browser (Chrome, Safari). Server log: visible as normal page request. GA4: visible with referrer (chatgpt.com, perplexity.ai). Identifiable as AI: yes, via referrer.

Way 3 — AI Browser as Daily Driver: A human uses Atlas or Comet as their default browser and navigates independently — via bookmarks, search engine, or direct URL entry. Server log: visible, but user-agent identical to Chrome. GA4: invisible or distorted (script-blocking, cookie rejection, no referrer). Identifiable as AI: no.

Why it matters for DAE: The three ways coexist, but with increasing Atlas/Comet adoption, the share shifts from Way 2 (identifiable) to Way 3 (invisible). Users who previously clicked links from the ChatGPT interface in their regular browser now use Atlas as their daily browser — and the referrer path disappears. This explains why sites may observe declining ChatGPT/Perplexity referrers in GA4 while server-log AI activity remains stable and Chrome sessions increase.

M-Stage: M2+ (relevant for measurement setup and traffic analysis)

Sources: Synthesized from MarTech.org (2025) and sources documented in AI Browser Masking.

See also: Dark AI Traffic · AI Browser Masking · Crawl-to-Referral Ratio

Level 6: Validation

Originality Prompt

The Originality Prompt is the validation question for Root-Source potential: “What information in this content could only exist because we created, measured, or experienced it?” Strong pass = clear primary data. Weak pass = synthesis of existing work. Fail = rewriting what others published. The Originality Prompt connects to Experiential Authority — documented first-hand experience is the strongest pass condition.

Discussed in: Root-Source Positioning

Signal Provenance

Signal Provenance is the verifiable chain of attribution from a claim back to its original source. In DAE, every factual statement must carry traceable provenance — enabling AI systems to evaluate source reliability and attach citations accurately.

Research foundation: Provenance-aware RAG systems increasingly prioritize sources with explicit attribution chains. The Citation Failure study (arXiv, 2025) demonstrates that citation precision correlates with source provenance clarity — claims with explicit attribution to named studies, dated observations, or documented methodologies receive more accurate citations than floating assertions. This aligns with broader E-E-A-T implementation research showing that verifiable author identity and source traceability are emerging ranking signals in AI retrieval systems.

Practical implementation: Signal Provenance requires: named sources with URLs, publication dates, author credentials, and methodology disclosure. The Journalistic Source Principle operationalizes this — every statistic includes study name, year, and URL. Signal Provenance is the validation mechanism for Experiential Authority — documented experience requires traceable evidence (dates, conditions, outcomes).

Discussed in: Root-Source Positioning

See also: Experiential Authority · Journalistic Source Principle · Citation Accuracy Gap

Cross-Reference Validation

Cross-Reference Validation is the process of verifying claims against multiple independent sources before publication. Part of the RAG-Pre-Pipeline that ensures only validated content enters the system. Connects to HITL Architecture — human validators execute Cross-Reference Validation before publication approval.

Discussed in: Implementation Blueprint

Cross-AI Synthesis

Cross-AI Synthesis is a testing methodology: Run identical prompts across ChatGPT, Claude, Perplexity, and Gemini, then synthesize findings. Identifies platform-specific citation behavior and opportunities. Use Platform Citation Patterns to interpret results and prioritize platform-specific optimizations. Feeds back into Parametric Correction Strategy when cross-platform discrepancies reveal parametric misconceptions.

Discussed in: Authority Intelligence

Root-Source Audit

A Root-Source Audit is a systematic evaluation of existing content against the four Root-Source characteristics AND against Structural Debt manifestations. Categorizes content as: Root-Source, Near Root-Source (gaps addressable), Strong Derivative, or Weak Derivative. V1.7 addition: Root-Source Audit now explicitly includes Structural Debt screening — Cannibalization, Decay, Duplication, and Freshness Gap analysis run alongside the four RSP characteristics.

Discussed in: Root-Source Positioning · Implementation Blueprint

See also: Structural Debt · Entity Fragmentation

Entity Fragmentation

Entity Fragmentation is an anti-pattern — the failure mode of Entity Architecture where the same conceptual entity gets defined inconsistently across multiple pages, diluting authority signals rather than concentrating them. Entity Fragmentation is the “silent killer” of topical authority — it happens gradually as content libraries grow without entity governance. Structurally, it is the most common symptom of accumulated Structural Debt.

How it occurs:

- Different writers define the same concept differently

- Product updates create pages that overlap with existing entity coverage

- Content audits fail to identify competing pages for the same entity

- Multiple “hub pages” emerge for concepts that should have one canonical source

- AI-accelerated content production without governance

Symptoms:

- Same entity mentioned across 10+ pages with inconsistent definitions

- No clear hierarchy between related entity pages

- Internal links use different anchor text for the same concept

- AI systems cite competitors instead of you for concepts you cover extensively

Prevention:

- Maintain an Entity Registry with canonical definitions

- Implement editorial gates that check entity consistency before publication

- Conduct quarterly Entity Audits to identify fragmentation

- Consolidate competing pages through redirects and content merging

Impact: Teams that consolidate fragmented entity content typically see 40–60% ranking improvements within 90 days because authority signals concentrate rather than divide.

Discussed in: Implementation Blueprint · System Architecture

Root cause: Structural Debt · Prevention: Entity Registry

Level 7: Implementation

DAE Maturity Model

The DAE Maturity Model is the organizational readiness framework for Digital Authority Engineering, developed by Manuel Hürlimann (GaryOwl.com). It assesses an organization’s AI visibility capability across six stages from M0 (Unaware) to M5 (Leading). The M-notation deliberately distinguishes maturity stages from the L1–L7 levels of the DAE Glossary taxonomy.

| Stage | Name | Characteristics | Key Terms at This Stage |

|---|---|---|---|

| M0 | Unaware | No AI visibility distinction from SEO | GEO/AEO/LLMO (awareness start) |

| M1 | Aware | Concept recognized, manual testing | Recency Signals, AI Crawl Governance, AI Discovery Infrastructure |

| M2 | Experimenting | Tools adopted, no RSP strategy | oAIS, On-Page Authority Signals, Entity Coherence, Author Entity Architecture |

| M3 | Systematic | Regular Citation Share measurement, RSP defined, Entity Registry established, Structured Data Layer implemented | Citation Share, Triangulation Strategy, Structural Debt screening, Intent-Layer Architecture |

| M4 | Optimizing | Continuous improvement, Root-Sources producing, Entity Architecture maintained | Canonical Facts Graph, Fan-Out Visibility, Competitive Citation Displacement, Vector Similarity Implementation |

| M5 | Leading | Industry Root-Source status, Citation Magnet ratio >1.0, Third-Party Authority Signals established | Citation Graph Centrality, Parametric Correction Strategy |

Discussed in: Implementation Blueprint

DAE Implementation Blueprint

The DAE Implementation Blueprint is the operational execution plan for the Digital Authority Engineering framework by GaryOwl.com — structured into three tracks with defined FTE allocations and milestones: Foundation (24 weeks, 0.9 FTE, M0→M3), Acceleration (16 weeks, 2.25 FTE, M2→M4), Leadership (52 weeks, 5.5 FTE, M3→M5). Includes Entity Architecture setup, Structured Data Layer implementation, AI Crawl Governance, and Third-Party Authority Signals strategy.

Discussed in: Implementation Blueprint

octyl Authority Learning Loop

The octyl Authority Learning Loop is a continuous improvement methodology developed by octyl® (GaryOwl.com) within the DAE framework: Discover (identify highly-cited sources) → Extract (analyze citation patterns) → Apply (implement in new content) → Validate (test across platforms) → Refine (update patterns). The Loop uses the DAE Glossary DefinedTermSet as its structured knowledge base — recognizing relevant terms by M-stage and L-layer, proposing tool usage, with human confirmation at each decision point (HITL Architecture).

Discussed in: Authority Intelligence

octyl Citation Type Taxonomy

The octyl Citation Type Taxonomy is a six-tier source classification by authority weight, developed by octyl® (GaryOwl.com) as a DAE-native instrument: (1) Primary Research, Official Docs = Highest. (2) Expert Opinions, Industry Reports = High. (3) Quality Journalism, Trade Publications = Medium-High. (4) Educational Content, Reference Sites = Medium. (5) Blogs, Forums, User Content = Low. (6) Commercial, Promotional = Lowest. Used by oCQS for citation quality weighting.

Discussed in: Authority Intelligence

octyl Authority Pattern Recognition

octyl Authority Pattern Recognition is an internal system developed by octyl® (GaryOwl.com) within the DAE framework that identifies structural and content patterns correlating with citation success. It learns from high-performing content to inform content creation. Navigates DAE framework layers (L1–L7), recognizes M-stage context, and surfaces relevant tool recommendations — always under human oversight. Part of the octyl™ Toolset — not available for purchase.

AI Discovery Infrastructure

AI Discovery Infrastructure is the foundational technical layer that enables AI crawlers to find, index, and prioritize your content for retrieval. Distinct from AI Crawl Governance (which controls how crawlers access content), AI Discovery Infrastructure determines whether AI systems can discover your content in the first place.

Core components:

| Component | Function | AI Relevance |

|---|---|---|

| sitemap.xml | Machine-readable URL inventory | AI crawlers use sitemaps to prioritize crawl queue |

| sitemap.html | Human-accessible site overview | Indirect discoverability signal |

| feed.xml (RSS/Atom) | Real-time content updates | Freshness signal for crawlers monitoring feeds |

| lastmod in sitemap | Page modification timestamp | Crawl prioritization for changed content |

| llms.txt | LLM-specific content manifest | Directs AI systems to preferred content paths |

| Canonical tags | Duplicate content resolution | Prevents authority splitting across URL variants |

Technical requirements: SE Ranking 2025 found that pages with First Contentful Paint (FCP) under 0.4 seconds receive 3x higher citation rates. Mobile optimization is equally critical — AI systems prioritize mobile-first indexed content.

M-Stage relevance: AI Discovery Infrastructure is an M1–M2 priority because it has no prerequisites and maximum leverage — a site not properly discoverable by AI crawlers cannot be cited regardless of content quality.

See also: AI Crawl Governance · Dark AI Traffic · Telemetry Layer

Author Entity Architecture

Author Entity Architecture is the systematic construction of a person entity hub that connects an author’s content, credentials, and third-party presence into a machine-readable authority network. In AI citation systems, author attribution is not just a human-readable byline — it is an entity relationship that AI systems evaluate when determining citation trustworthiness.

Core structure:

- Author Hub Page — Canonical author page (/about/[author-name]/) functioning as entity hub; all authored content links back to it

- Person Schema — Full schema.org/Person markup: name, jobTitle, worksFor, knowsAbout, sameAs links

- sameAs Connections — Explicit links to same-person presence on LinkedIn, Twitter/X, Wikipedia (if notable), Google Scholar, ORCID

- Content Attribution Chain — Every Article schema links author → Person entity → Organization entity

- Third-Party Corroboration — External mentions of author expertise (conference talks, quoted in publications, industry profiles)

Why it matters for DAE: Expert Attribution is RSP characteristic #3. Without explicit Author Entity Architecture, a credentialed author is invisible to AI systems as an authority signal. The author’s expertise must be machine-readable, not just human-readable.

See also: Root-Source Positioning · Experiential Authority · Third-Party Authority Signals · On-Page Authority Signals

Vector Similarity Implementation

Vector Similarity Implementation refers to the practical deployment of dense vector search systems (FAISS, HNSW) to analyze semantic similarity relationships within a content corpus — enabling corpus-level Structural Debt detection and content clustering that mirrors how AI retrieval systems actually process content.

Why it belongs in DAE (M4–M5): AI systems that use dense retrieval (most RAG implementations) evaluate content similarity at embedding level, not keyword level. A content corpus with high semantic overlap — invisible to traditional duplicate detection — appears highly fragmented to embedding-based retrieval. Vector Similarity Implementation enables organizations to see their corpus as AI systems see it.

Practical application:

- Cannibalization detection: multilingual-e5-large-instruct embeddings + cosine similarity >0.85 identifies competing pages

- Near-duplicate detection: SimHash 64-bit, Hamming distance ≤3

- Semantic duplicate detection: Cosine similarity >0.95

- Content clustering: BERTopic (UMAP + HDBSCAN + c-TF-IDF) for topic architecture visualization

- Scale: FAISS+HNSW index enables analysis of 5000+ pages at M5 scale

See also: Structural Debt · Entity Fragmentation · Root-Source Audit

Frequently Asked Questions

What is the difference between DAE and GEO/AEO/LLMO?

GEO, AEO, and LLMO are optimization tactics — they improve how existing content performs in AI systems. DAE operates at paradigm level: it defines what authority is, how it is constructed, and how it is measured. GEO/AEO/LLMO are more effective when applied within a DAE framework because they optimize infrastructure that is already architecturally sound. Without DAE, you are optimizing content that may be undermined by Structural Debt, Entity Fragmentation, or poor AI Discovery Infrastructure.

Where do I start if my organization is at M0?

Three actions with no prerequisites: (1) Implement AI Discovery Infrastructure — sitemap.xml with lastmod, feed.xml, correct robots.txt entries for AI crawlers. (2) Add Person and Organization schema to your primary pages (Structured Data Layer basics). (3) Audit your top 10 pages against the Originality Prompt. These three actions cover M0→M1 with minimal FTE investment.

What is Structural Debt and how do I know if I have it?

Structural Debt is the accumulated degradation of citation potential from ungoverned content growth. You likely have it if: multiple pages cover the same topic with different definitions, your blog has grown significantly without systematic entity governance, or your Citation Share has been declining despite content production. Quantify it via Root-Source Audit with embedding similarity analysis.

How does octyl relate to the DAE framework?

octyl® is a toolset developed by GaryOwl.com that navigates the DAE framework as its knowledge base. octyl does not replace DAE — it implements DAE processes with AI assistance under HITL Architecture. The DAE Glossary DefinedTermSet (this document’s machine-readable schema) serves as octyl’s structured knowledge source, enabling it to recognize relevant terms by M-stage and L-layer. octyl terms (oAIS, oCQS, etc.) are located within DAE layers at their relevant positions.

What is the relationship between Citation Share and Fan-Out Visibility?

Citation Share measures volume: how often you are cited within your domain. Fan-Out Visibility measures reach: across how many distinct query types you are cited. A narrow specialist may have high Citation Share for one specific query and low Fan-Out Visibility. A Root-Source with Citation Graph Centrality has both — it is cited frequently AND across a wide range of related queries. Building Fan-Out Visibility requires Intent-Layer Architecture and broader topical coverage.

How does DAE address parametric knowledge — information already encoded in AI models?

This is the hardest problem in AI authority optimization. Knowledge Pathways explains the distinction: RAG-layer corrections take 30 days; parametric corrections take 6–18 months. The Parametric Correction Strategy describes the approach: create authoritative contradictory content → amplify via Third-Party Authority Signals on Wikipedia, Reddit, and major publications → generate Cross-AI Coverage via RAG while waiting for parametric re-encoding. There is no shortcut for parametric correction — only systematic, sustained authority building.

Entity Architecture Quick Reference

For teams implementing Entity SEO within the DAE framework:

The Entity Architecture Stack

| Layer | Component | Function |

|---|---|---|

| 5 | Third-Party Authority Signals | Review sites, Reddit, Wikipedia, YouTube + external validation |

| 4 | Structured Data Layer (Technical) | Organization, Person, Article, DefinedTermSet, FAQ… |

| 3 | Internal Linking (Relational) | Descriptive anchors, hub-spoke links |

| 2 | Hub-and-Spoke Content (Structural) | Canonical hubs + supporting spokes |

| 1 | Entity Registry (Governance) | Canonical definitions, ownership |

Layers 2–3 are components of Entity Architecture without dedicated glossary entries.

Diagram: Entity Architecture Stack — 5-Stage Framework for Machine-Verifiable Authority (© 2026 GaryOwl.com)

Knowledge Pathways Quick Reference

| PARAMETRIC (60%) | RAG-HYBRID (30%) | RAG-FIRST (10%) | |

|---|---|---|---|

| Mechanism | Training data only | Blended: parametric + retrieved | Real-time retrieval with visible citations |

| Storage | Encoded in model weights | Weights + live retrieval | Fetched in real-time |

| Update Speed | Months to years | Days to weeks | Real-time |

| Citation Behavior | No visible citations | Selective citations | Explicit URL citations |

| Favors | Wikipedia, established brands, long-standing authority | Authoritative + fresh content | Structured, fresh, Schema-optimized |

| Optimize via | Brand building, Wikipedia presence, Third-Party Signals | Both parametric + RAG strategies | Structured Data, RAG-Optimized structure, freshness |

| Correct via | Parametric Correction Strategy (6–18 months) | Combined approach | Fresh content + Schema (30 days) |

| Examples | ChatGPT (no search), Claude (no tools) | ChatGPT with browsing, Gemini | Perplexity, Google AI Overviews |

Root-Source Positioning requires ALL THREE pathways.

Knowledge Pathways Quick Reference — DAE Glossary v1.7

Platform Citation Patterns Quick Reference

| If optimizing for… | Prioritize… |

|---|---|

| ChatGPT | Wikipedia, Reddit engagement, news mentions, Bing indexing |

| Perplexity | G2/Trustpilot profiles, real-time freshness, review presence |

| Google AI Overviews | Organic rankings, YouTube content, diverse backlinks |

| Claude | Factual accuracy, clear provenance, helpful content |

| All platforms | Entity Coherence, Structured Data, Root-Source content, AI Discovery Infrastructure |

Platform Citation Patterns Quick Reference — DAE Glossary v1.7

Entity Architecture vs. Entity SEO Terminology

| Entity SEO Term | DAE Term | Notes |

|---|---|---|

| Entity SEO | Entity Architecture | DAE uses “Architecture” to emphasize structure over optimization |

| Knowledge Graph Optimization | Entity Coherence | Coherence is the principle, Architecture is the implementation |

| Topic Clustering | Hub-and-Spoke Content (see Entity Architecture) | DAE emphasizes entity relationships, not just topics |

| Entity Disambiguation | Entity Registry | Registry prevents ambiguity through canonical definitions |

| Semantic Authority | Root-Source Positioning | RSP is the strategic goal that Entity Architecture enables |

| Schema Markup | Structured Data Layer | DAE frames it as an architectural layer, not a standalone tactic |

| Entity Fragmentation | Entity Fragmentation | Same term — the universal anti-pattern |

| Off-page SEO | Third-Party Authority Signals | DAE focuses on authority signals, not link building |

| Technical Debt (content) | Structural Debt | DAE adaptation of software engineering concept for content corpus |

| Author E-E-A-T | Author Entity Architecture + Experiential Authority | DAE separates structural implementation from signal type |

Entity Architecture vs. Entity SEO Terminology — DAE Glossary v1.7

Sources

Primary Research (Academic)

- Aggarwal, P. et al. (2024). “GEO: Generative Engine Optimization.” Princeton University & IIT Delhi, KDD 2024. https://arxiv.org/abs/2311.09735

- Kumar, A. & Palkhouski, L. (2025). “AI Answer Engine Citation Behavior: GEO-16 Framework.” UC Berkeley & Wrodium Research. https://arxiv.org/abs/2509.10762

Industry Studies (Core)

- Growth Memo (Kevin Indig, 2026). “The 44.2% Pattern.” https://www.growth-memo.com/p/the-science-of-how-ai-pays-attention

- Averi.ai (2026). “B2B SaaS Citation Benchmarks Report — 680M Citations Analyzed.” https://www.averi.ai/how-to/chatgpt-vs.-perplexity-vs.-google-ai-mode-the-b2b-saas-citation-benchmarks-report-(2026)

- Ekamoira (2026). “LLM Citation Tracking: How AI Systems Choose Sources.” https://www.ekamoira.com/blog/ai-citations-llm-sources

- Writesonic (2025). “LLM Citation Study: 282M Citations Across 18 Industries.” https://writesonic.com/blog/llm-ai-search-industry-citation-study

- Onely (2024/2025). “LLM Ranking Factors.” https://www.onely.com/blog/llm-friendly-content/